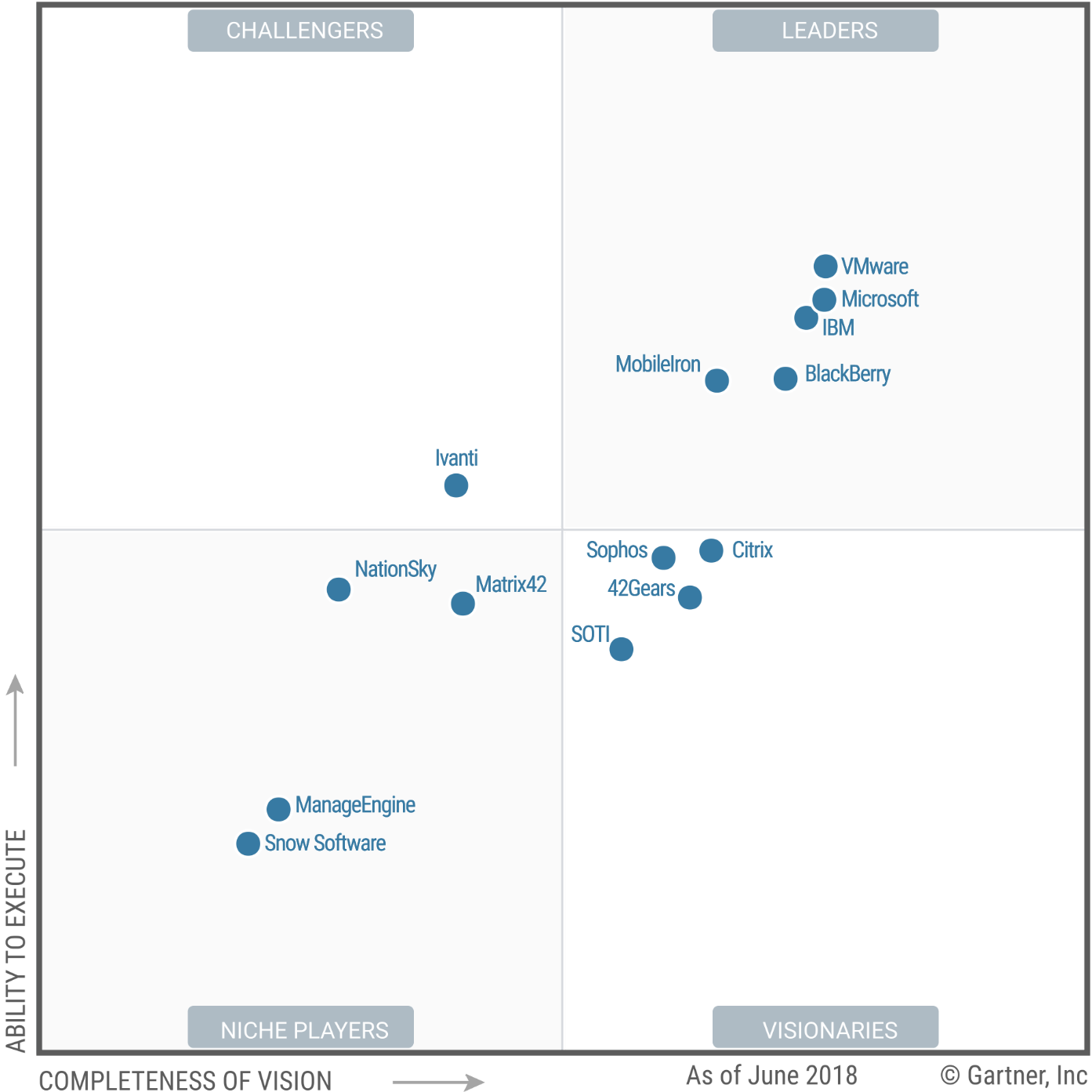

We've got several processes that move files across servers: SFTP, FTP, and SCP, on Windows, Linux, and AIX. There is a workflow component (we usually require a control file with filenames and hash values to move a batch of related files). The action is often initiated on our servers to get the files, so we need to make sure they're done being written. We have some homegrown scripts to do this, but they don't always work properly, and troubleshooting, maintenance, and log review are not easy this way. There are a lot of servers, and our scripts don't have central logging or a dashboard/console/etc.

We're looking into commercial products to do this. Another team in our company is using Aspera; does anyone have any thoughts on that, or other favored products? Has anyone used MQ File Transfer Edition? I have no idea what our budget is for this yet. Just trying to get a handle on the product space from the perspective of other admins.

Edit: In my situation, we're moving 2-file payloads (one binary, one metadata) of scanned images from different sources to different destinations. We wait until a 3rd control file is written with the checksums; when the move is complete, the control file is deleted. The sources are primarily a handful of Windows file servers, or Windows SFTP servers, that receive these files from scanning processes. We also have sources that are FTP or SFTP servers that receive the same payloads from external parties. The destination is a set of AIX servers that ingest the images into an archive, so the files don't remain in the destination either. Robustness is definitely our major concern. The binary files probably average around 100 MB, the metadata quite a bit smaller. (Without centralized logging, I can't give a better number.)

I have not used MQ File Transfer Edition, so I cannot comment on it. I have done a lot of file transfers, including EDI, FTP, AS2, FTPS, SFTP, rsync, SCP, Aspera, svn, etc. Ultimately my answer would depend on your exact requirements. From what it sounds like, the most important thing you are after is reliability of file transfers.

Firstly, I would recommend some sort of standardization of platforms, maintenance, and management, which is what it sounds like you are looking at doing. Make every server, regardless of OS/config, use the same process to get files to and from nodes. Multiplying the troubleshooting across different configurations can make simple tasks very frustrating. When I think of reliability I do not think of Windows, but a lot of the time there simply isn't a way to avoid it.

While I do not know your exact requirements, I will provide some possible solutions for you. If you can clarify exactly what you need (WAN, LAN, file size, number of transfers daily, importance of transfers, etc.), I can provide a more accurate answer. The transfers I have set up in the past range from small <1 KB files to hundreds of GBs of data, from transfers where people don't get paid if the transfer doesn't happen to data that may never even be used, and from open internet transfers to encrypted data sent over encrypted transfers across encrypted VPNs.

What you are really after is a semi-new term in the industry called Managed File Transfer. At the end of the day, get the Gartner Magic Quadrant report for this, review it, and choose a vendor that meets your needs. You'll notice Aspera in the list, but consider CFI for your needs. Considering you are specifically looking for a commercial product, this is your best bet. Private message me or comment if you want any more input on my research in this sector. This is good because FTP is universal: it's used in so many places and has so much support across systems.
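For anyone who wants to picture the control-file workflow from the question (wait for a control file that lists filenames and hash values, verify the batch, move it, then delete the control file), here is a minimal Python sketch. Several things in it are assumptions and not from the original post: the control file format (one `<hex digest>  <filename>` pair per line), the use of SHA-256, and the function names `sha256_of`, `parse_control_file`, and `move_batch`. A local `shutil.move` stands in for whatever transport (SFTP, SCP, etc.) is actually used.

```python
import hashlib
import shutil
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large binaries are not read into memory at once."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def parse_control_file(control: Path) -> dict[str, str]:
    """Return {filename: expected digest}. Assumed format: '<hex>  <name>' per line."""
    expected = {}
    for line in control.read_text().splitlines():
        if not line.strip():
            continue
        digest, name = line.split(maxsplit=1)
        expected[name.strip()] = digest.lower()
    return expected


def move_batch(control: Path, dest_dir: Path) -> list[Path]:
    """Verify every file named in the control file, move the batch, delete the control file.

    Raises ValueError, and moves nothing, if a file is missing or fails its checksum.
    """
    src_dir = control.parent
    expected = parse_control_file(control)

    # Verify the whole batch first so a bad payload is never partially moved.
    for name, digest in expected.items():
        src = src_dir / name
        if not src.is_file():
            raise ValueError(f"missing file listed in control file: {name}")
        if sha256_of(src) != digest:
            raise ValueError(f"checksum mismatch: {name}")

    dest_dir.mkdir(parents=True, exist_ok=True)
    moved = []
    for name in expected:
        target = dest_dir / name
        shutil.move(str(src_dir / name), str(target))
        moved.append(target)

    # Deleting the control file marks the batch as complete.
    control.unlink()
    return moved
```

Because the control file only appears once the payload files are fully written, polling for it also covers the "make sure they're done being written" concern. Over a real network transfer you would probably want to re-check the hashes on the destination side as well.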
